# Visual Question Answering

Fresh Picks

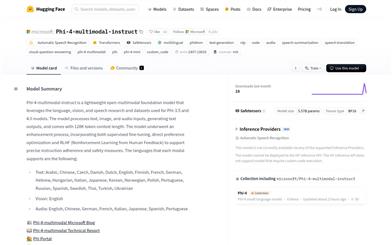

Phi 4 Multimodal Instruct

Phi-4-multimodal-instruct is a multimodal foundation model developed by Microsoft that accepts text, image, and audio inputs and generates text outputs. Built on the research and datasets of Phi-3.5 and Phi-4.0, the model has undergone supervised fine-tuning, direct preference optimization, and reinforcement learning from human feedback to improve instruction following and safety. It handles multilingual inputs, has a 128K context length, and applies to multimodal tasks such as speech recognition, speech translation, and visual question answering, with its largest improvements in speech and vision tasks. Developers can use it to build a wide range of multimodal applications.

AI Model

53.3K

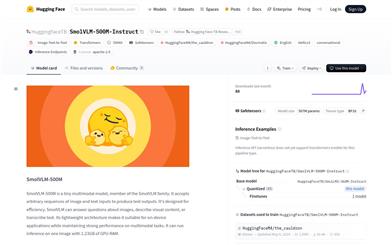

Smolvlm 500M Instruct

SmolVLM-500M, developed by Hugging Face, is a lightweight multimodal model in the SmolVLM series. Based on the Idefics3 architecture, it focuses on efficient image and text processing. The model accepts image and text inputs in any order and generates text outputs, making it suitable for tasks such as image description and visual question answering. Its lightweight design allows it to run on resource-constrained devices while maintaining strong performance in multimodal tasks. The model is released under the Apache 2.0 license.

AI Model

60.2K

Omagent.com

OmAgent is a multimodal-native agent framework for smart devices and similar applications. It uses a divide-and-conquer algorithm to solve complex tasks efficiently, and can preprocess long videos and answer questions about them with human-like precision. It can also provide personalized clothing suggestions based on user requests and optional weather conditions. The official website does not currently specify pricing. The features are aimed primarily at users who need efficient task processing and intelligent interaction, such as developers and businesses.

AI Agent

46.4K

Paligemma2 3b Pt 224

Developed by Google, PaliGemma 2 is a vision-language model that combines the SigLIP vision model with the Gemma 2 language model. It processes image and text inputs to generate text outputs and performs well on vision-language tasks such as image description and visual question answering. Its main advantages include robust multilingual support, an efficient training architecture, and strong performance across diverse tasks. PaliGemma 2 is intended to help researchers and developers tackle complex interactions between vision and language.

AI Model

46.1K

Paligemma2 3b Pt 448

PaliGemma 2 is a vision-language model developed by Google, inheriting the capabilities of the Gemma 2 model, enabling it to handle image and text inputs to generate text outputs. The model excels in various visual language tasks such as image description and visual question answering. Its main advantages include robust multilingual support, an efficient training architecture, and extensive applicability. This model is suitable for a wide range of applications that require processing visual and textual data, such as social media content generation and intelligent customer service.

AI Model

43.3K

Internvl2 5 26B MPO

InternVL2_5-26B-MPO is a multimodal large language model (MLLM) that builds on InternVL2.5 and improves its performance through Mixed Preference Optimization (MPO). The model handles multimodal data, including images and text, and is widely applied in scenarios such as image captioning and visual question answering. Its strength lies in understanding images and generating text closely tied to their content. It performs strongly on multimodal tasks, with evaluation results reported on the OpenCompass Leaderboard, and gives researchers and developers a capable tool for exploring multimodal AI.

AI Model

49.1K

Internvl2 5 1B MPO

InternVL2_5-1B-MPO is a multimodal large language model (MLLM) built on InternVL2.5 and Mixed Preference Optimization (MPO). The model integrates an incrementally pre-trained InternViT with various pre-trained large language models (LLMs), including InternLM 2.5 and Qwen 2.5, connected through a randomly initialized MLP projector. InternVL2.5-MPO retains the ‘ViT-MLP-LLM’ paradigm from InternVL 2.5 and its predecessors while introducing support for multiple images and video data. It handles a variety of vision-language tasks, including image captioning and visual question answering.

AI Model

55.8K

Deepseek VL2 Small

DeepSeek-VL2 is a series of advanced large-scale Mixture-of-Experts (MoE) vision-language models that improves significantly on its predecessor, DeepSeek-VL. The series performs strongly across tasks including visual question answering, optical character recognition, document/table/chart understanding, and visual grounding. It comprises three variants, DeepSeek-VL2-Tiny, DeepSeek-VL2-Small, and DeepSeek-VL2, with 1.0 billion, 2.8 billion, and 4.5 billion active parameters respectively. DeepSeek-VL2 achieves competitive or state-of-the-art performance against existing open-source dense and MoE-based models with a similar or smaller number of active parameters.

AI Model

55.2K

Deepseek VL2

DeepSeek-VL2 is a series of large Mixture-of-Experts (MoE) vision-language models that improves significantly on its predecessor, DeepSeek-VL. The series performs strongly in tasks such as visual question answering, optical character recognition, document/table/chart understanding, and visual grounding. DeepSeek-VL2 includes three variants: DeepSeek-VL2-Tiny, DeepSeek-VL2-Small, and DeepSeek-VL2, with 1.0B, 2.8B, and 4.5B active parameters, respectively. Compared with existing open-source dense and MoE-based models with similar or fewer active parameters, DeepSeek-VL2 achieves competitive or state-of-the-art performance.

AI Model

66.5K

Pixtral 12B 2409

Pixtral-12B-2409 is a multimodal model developed by the Mistral AI team, featuring a 12-billion-parameter multimodal decoder and a 400-million-parameter vision encoder. The model performs well on multimodal tasks, supports images of varying sizes, and maintains strong performance on text benchmarks. It is suitable for applications that process image and text data, such as image description generation and visual question answering.

AI image generation

51.1K

Videollama2 7B

Developed by the DAMO-NLP-SG team, VideoLLaMA2-7B is a multimodal large language model focused on video content understanding and generation. The model performs strongly in video question answering and video captioning, handling complex video content and generating accurate, natural-language descriptions. It has been optimized for spatio-temporal modeling and audio understanding to support the analysis and processing of video content.

AI video generation

72.0K

Videollama2 7B Base

VideoLLaMA2-7B-Base, developed by DAMO-NLP-SG, is a large video language model focused on understanding and generating video content. This model demonstrates exceptional performance in visual question answering and video captioning. Through advanced spatiotemporal modeling and audio understanding capabilities, it provides users with a new tool for analyzing video content. Based on the Transformer architecture, it can process multi-modal data, combining textual and visual information to generate accurate and insightful outputs.

AI video generation

75.9K

Idefics 80b

HuggingFaceM4/idefics-80b-instruct is an open-source multimodal model that can accept both image and text input and generate relevant text output. It excels in tasks like visual question answering and image description, making it a versatile intelligent assistant model. Developed by the Hugging Face team, it's trained on open datasets and is available for free use.

AI Model

63.5K

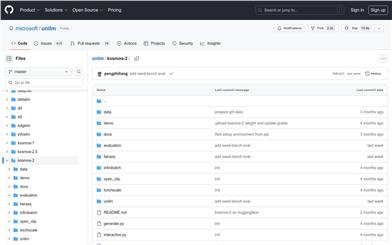

Kosmos 2

Kosmos-2 is a multimodal large language model that can associate natural language with various input forms like images and videos. It can be used for tasks such as phrase grounding, referring expression comprehension, referring expression generation, image description, and visual question answering. Kosmos-2 is trained and evaluated using the GRIT dataset, which contains a large number of image-text pairs. Its strength lies in grounding natural language in visual information.

AI Model

54.9K

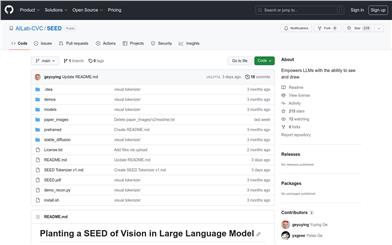

SEED

SEED is a large-scale pre-trained model that, through pre-training and instruction tuning on interleaved text and visual data, performs strongly across a wide range of multimodal understanding and generation tasks. SEED also shows emergent compositional capabilities, such as multi-turn, in-context multimodal generation, much like an AI assistant. The project also includes SEED Tokenizer v1 and SEED Tokenizer v2, which convert images into discrete visual tokens.

AI Model

59.3K

Featured AI Tools

Flow AI

Flow is an AI-driven filmmaking tool designed for creators that uses Google DeepMind's advanced models to help users create movie clips, scenes, and stories. The tool provides a seamless creative experience and supports both user-supplied assets and content generated within Flow. It is available through the Google AI Pro and Google AI Ultra plans, which offer different feature sets for different user needs.

Video Production

43.1K

Nocode

NoCode is a platform that requires no programming experience: users describe their ideas in natural language and the platform quickly generates an application. It aims to lower development barriers so that more people can realize their ideas. Real-time previews and one-click deployment make it well suited to non-technical users.

Development Platform

44.7K

Listenhub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

42.5K

Minimax Agent

MiniMax Agent is an intelligent AI companion built on the latest multimodal technology. Its MCP multi-agent collaboration lets teams of AI agents solve complex problems efficiently. It provides features such as instant answers, visual analysis, and voice interaction, and is claimed to increase productivity tenfold.

Multimodal technology

43.3K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's newly released AI image generation model, with significantly improved generation speed and image quality. Using a codec with an ultra-high compression ratio and a new diffusion architecture, it can generate images in milliseconds, avoiding the waiting time of traditional generation. The model also improves image realism and detail by combining reinforcement learning algorithms with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

42.5K

Openmemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

42.8K

Fastvlm

FastVLM is an efficient visual encoding model designed specifically for vision-language models. It uses the FastViTHD hybrid vision encoder to reduce both the time required to encode high-resolution images and the number of output tokens, delivering strong speed and accuracy. FastVLM is positioned to give developers vision-language processing capabilities for a range of scenarios, and it performs particularly well on mobile devices that require rapid response.

Image Processing

41.7K

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M